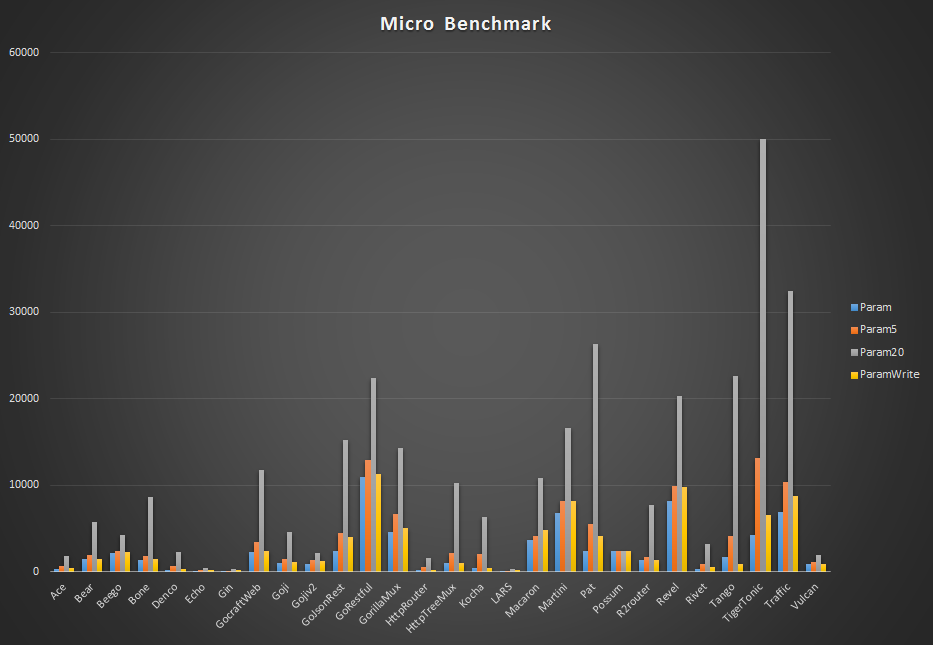

The values of the parameters must be passed to the handler function somehow, which requires allocations. The intention is to see how the routers scale with the number of parameters. Same as before, but now with multiple parameters, all in the same single route. Then a request for a URL matching this pattern is made and the router has to call the respective registered handler function.

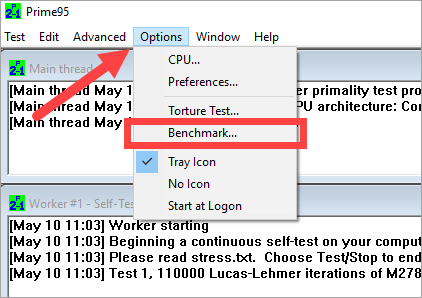

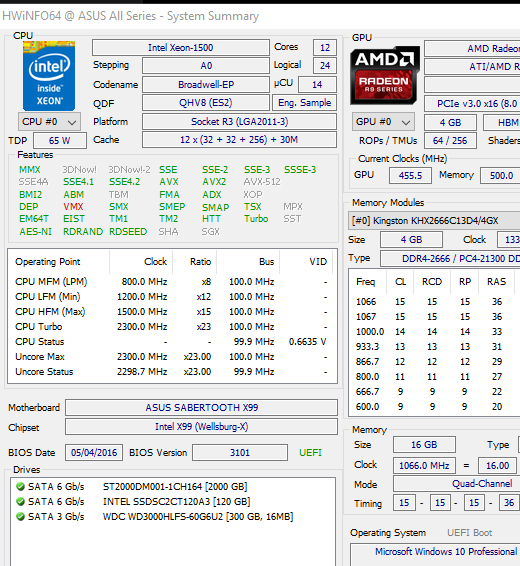

In the first benchmark, only a single route, containing a parameter, is loaded into the routers. The following benchmarks measure the cost of some very basic operations. The fastest router only needs 1.8% of the time http.ServeMux needs. The logs below show, that http.ServeMux has only medium performance, compared to more feature-rich routers. The third number is the amount of heap memory allocated in bytes, the last one the average number of allocations made per repetition.

The second column shows the time in nanoseconds that a single repetition takes. If you are unfamiliar with the go test -bench tool, the first number is the number of repetitions the go test tool made, to get a test running long enough for measurements. In the StaticAll benchmark each of 157 URLs is called once per repetition (op, operation). The only intention of this benchmark is to allow a comparison with the default router of Go's net/http package, http.ServeMux, which is limited to static routes and does not support parameters in the route pattern.

It might not be a realistic URL-structure. It is just a collection of random static paths inspired by the structure of the Go directory. The Static benchmark is not really a clone of a real-world API. I'm pretty sure you can detect a pattern Router The best 3 values for each test are bold. The following table shows the memory required only for loading the routing structure for the respective API. So keep in mind, that I am not completely unbiased Resultsīesides the micro-benchmarks, there are 3 sets of benchmarks where we play around with clones of some real-world APIs, and one benchmark with static routes only, to allow a comparison with http.ServeMux. In fact, this benchmark suite started as part of the packages tests, but was then extended to a generic benchmark suite. Personally, I prefer slim and optimized software, which is why I implemented HttpRouter, which is also tested here. If you care about performance, this benchmark can maybe help you find the right router, which scales with your application. The frameworks are configured to do as little additional work as possible. But since we are only interested in decent request routing, I think this is not entirely unfair. This benchmark tries to measure their overhead.īeware that we are comparing apples to oranges here, we compare feature-rich frameworks to packages with simple routing functionality only. Lately more and more bloated frameworks pop up, outdoing one another in the number of features. Moreover, many of them are very wasteful with memory allocations, which can become a problem in a language with Garbage Collection like Go, since every (heap) allocation results in more work for the Garbage Collector. Unfortunately, most of the (early) routers use pretty bad routing algorithms. Since the default request multiplexer of Go's net/http package is very simple and limited, an accordingly high number of HTTP request routers exist. Go is a great language for web applications. 
Of course the tested routers can be used for any kind of HTTP request → handler function routing, not only (REST) APIs. Some of the APIs are slightly adapted, since they can not be implemented 1:1 in some of the routers. This benchmark suite aims to compare the performance of HTTP request routers for Go by implementing the routing structure of some real world APIs.
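The micro-benchmarks note that parameter values must be passed to the handler somehow, and that this costs allocations. The following is a minimal sketch, not code from any of the benchmarked routers: a hypothetical single-route matcher (`Param`, `Handle`, `newRoute` and `serve` are illustrative names) that collects `:name`-style parameters into a slice of key/value pairs, loosely in the spirit of the `Params` slice HttpRouter hands to its handlers. It uses `testing.AllocsPerRun` from the standard library to count heap allocations per request for a 1-parameter and a 5-parameter route.

```go
package main

import (
	"fmt"
	"strings"
	"testing"
)

// Param is one named path parameter and the value captured from the URL.
// Hypothetical type, for illustration only.
type Param struct {
	Key, Value string
}

// Handle is a hypothetical handler signature: the router passes the
// captured parameter values to the handler as a slice.
type Handle func(params []Param)

// route is a single registered pattern such as "/user/:name/:id".
type route struct {
	segments []string
	nParams  int // number of ":name" segments, counted once at registration
	handle   Handle
}

func newRoute(pattern string, h Handle) *route {
	segs := strings.Split(strings.TrimPrefix(pattern, "/"), "/")
	n := 0
	for _, s := range segs {
		if strings.HasPrefix(s, ":") {
			n++
		}
	}
	return &route{segments: segs, nParams: n, handle: h}
}

// serve matches path segment by segment and, on a match, calls the handler.
func (rt *route) serve(path string) bool {
	if !strings.HasPrefix(path, "/") {
		return false
	}
	rest := path[1:]
	// Sized up front, so append below never grows the slice. Because the
	// slice is handed to an arbitrary handler, the compiler generally has to
	// put it on the heap: one allocation whose size grows with the
	// number of parameters.
	params := make([]Param, 0, rt.nParams)
	for i, seg := range rt.segments {
		part, tail, found := strings.Cut(rest, "/")
		// A separator must follow every segment except the last one;
		// otherwise the path has too few or too many segments.
		if found == (i == len(rt.segments)-1) {
			return false
		}
		if strings.HasPrefix(seg, ":") {
			if part == "" {
				return false // parameters must not be empty
			}
			params = append(params, Param{Key: seg[1:], Value: part})
		} else if seg != part {
			return false // static segment mismatch
		}
		rest = tail
	}
	rt.handle(params)
	return true
}

func main() {
	user := newRoute("/user/:name/:id", func(params []Param) {
		fmt.Println(params)
	})
	user.serve("/user/gordon/42")

	// Average heap allocations per request, as reported by AllocsPerRun.
	for _, tc := range []struct{ pattern, path string }{
		{"/a/:p1", "/a/1"},
		{"/a/:p1/:p2/:p3/:p4/:p5", "/a/1/2/3/4/5"},
	} {
		rt := newRoute(tc.pattern, func([]Param) {})
		allocs := testing.AllocsPerRun(1000, func() { rt.serve(tc.path) })
		fmt.Printf("%-24s %.0f allocs/request\n", tc.pattern, allocs)
	}
}
```

Sizing the slice from the pattern's parameter count trades a little bookkeeping at registration time for avoiding repeated growth in `append` on every request. Real routers use various other strategies (pooling, fixed-size arrays, context values), which is exactly what the multi-parameter benchmarks compare.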
